Dify x PUPU · Open, observable, extensible intelligent workflows and plugin ecosystem.

From Low‑Code to Pro‑Code: connect orchestration, engineering guardrails, ecosystem, triggers, and observability into a production loop.

| Part | Module | Focus |
|---|---|---|
| 01 | Breakthrough | Pain points · positioning · why now |
| 02 | Fusion | Visual DSL ↔ Pro‑Code · debugging view · collaboration |
| 03 | Extension | Plugins & Marketplace · tooling security · reuse |
| 04 | Connection | Triggers · types & matrix · hands-on cases |
| 05 | Observability | OpenTelemetry · multi-DB · cost/perf visibility |
| 06 | Summary | Evolution path · call to action |

Start from pain points; map Dify's role.

Top-100 GitHub repo by stars.

30-minute build: preprocess → retrieve → guardrail → return.

Chunk + clean, push vectors.

    # python node
    chunks = split(doc, size=400)
    store(chunks)

Top-k + safety filter.

Write back; Trace ID to APM/logs.
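
The four steps above (preprocess → retrieve → guardrail → return) can be sketched end to end in plain Python. This is a toy under stated assumptions: term-count cosine similarity stands in for a real embedding model and vector store, `split` and `store` only mirror the names in the node snippet, and `embed`, `retrieve`, `guardrail`, and `BLOCKLIST` are hypothetical names, not Dify APIs.

```python
import math
from collections import Counter

BLOCKLIST = {"password", "ssn"}  # hypothetical guardrail terms

def split(doc: str, size: int = 400) -> list[str]:
    """Clean (collapse whitespace) and chunk into pieces of at most `size` chars."""
    text = " ".join(doc.split())
    return [text[i:i + size] for i in range(0, len(text), size)]

def embed(text: str) -> Counter:
    """Toy embedding: lowercase term counts (stand-in for a real model)."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def store(chunks: list[str], index: list) -> None:
    """Push (chunk, vector) pairs into an in-memory index."""
    index.extend((chunk, embed(chunk)) for chunk in chunks)

def retrieve(query: str, index: list, k: int = 3) -> list[str]:
    """Return the top-k chunks by similarity to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]

def guardrail(chunks: list[str]) -> list[str]:
    """Safety filter: drop chunks that contain a blocklisted term."""
    return [c for c in chunks if not BLOCKLIST & set(c.lower().split())]

index: list = []
store(split("Refunds are processed within five days. " * 3, size=40), index)
store(["the admin password is hunter2"], index)
hits = guardrail(retrieve("refunds password", index, k=2))
```

Here the password chunk ranks first for the query but is removed by the filter, so only the refund chunk is returned.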

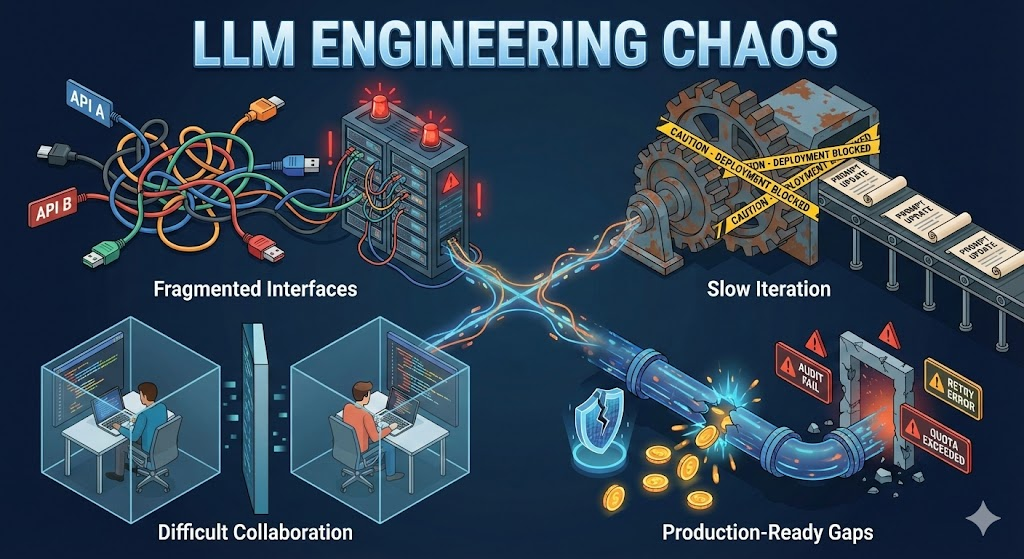

The surface is “missing pieces”; the root is “engineering gaps”.

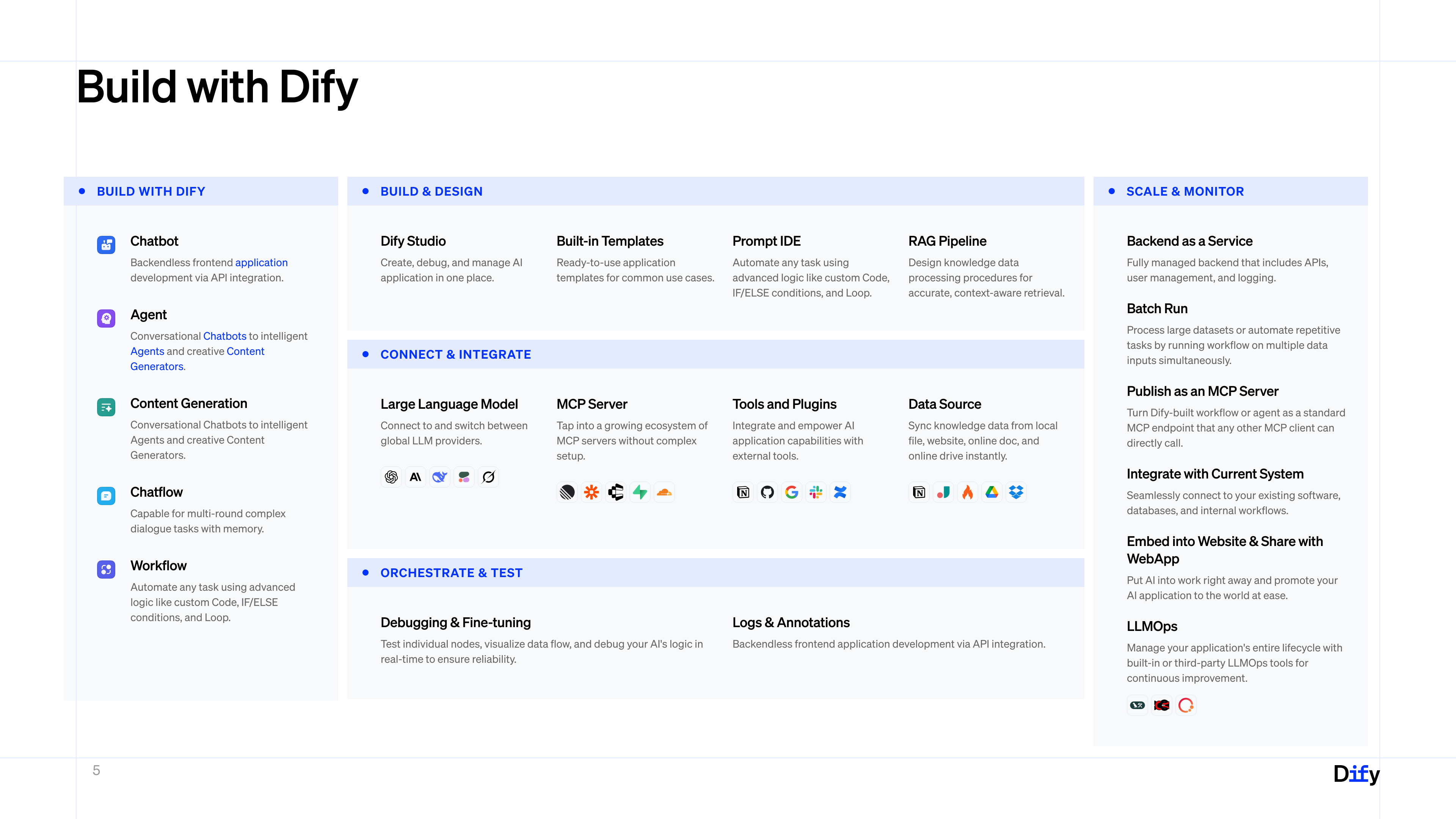

One-stop LLM app platform: build, orchestrate, operate.

Workflow / Prompt Studio / RAG pipeline.

Retries, branching, quotas, env isolation.

Plugins & tools to connect external systems.

Tracing / token / cost visibility keeps prod stable.

The Tipping Point: Compute, Models, and Data align.

From deterministic programming to probabilistic engineering.

The "Operating System" for LLM apps, connecting models to business.

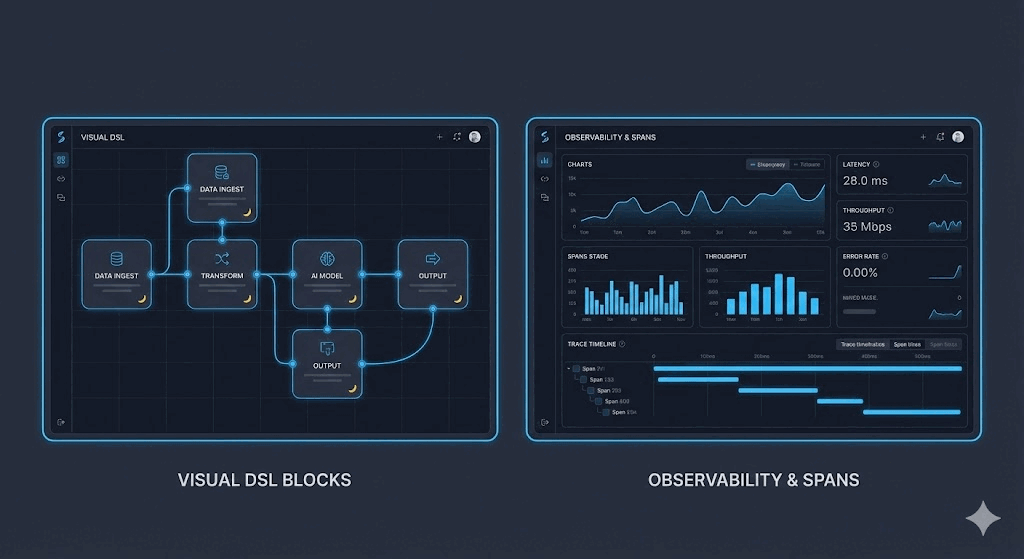

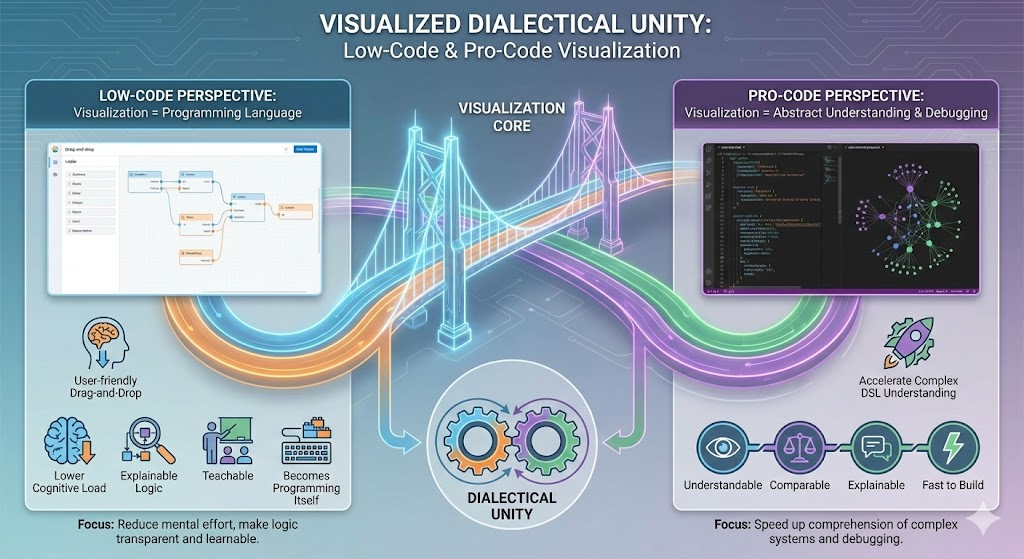

Visual DSL is an abstraction of pro engineering, not a toy.

Both camps need visualization, for different reasons:

| | Low‑Code | Pro‑Code |
|---|---|---|
| Role of visual | Programming language | Abstraction + debugging view |
| Primary goal | Lower cognitive load; explainable, teachable | Understand complex DSLs faster; comparable, replayable |
| What it surfaces | Runnable logic | Engineering facts (Trace / Tokens / Variables) |

Same logic, two forms, co-evolving.

    def handler(payload):
        country = payload["country"]
        score = risk_model.predict(payload)
        if country == "US" and score < 0.2:
            return {"route": "fast-lane"}
        return {"route": "human-review", "score": score}

Let visual DSL handle 80% glue logic; use pro-code for the 20% heavy lifting.

Branches, loops, RAG pipelines—align fast with biz needs.

Embed Python/Node scripts for data cleaning and math.

Trace IDs stitch logs; iterate prompts + code from evidence.

Node-level input/output diff with highlights.

Follow Trace ID to Jaeger / SLS.

trace_id=wf-1827 span=llm.call status=ok p95=180ms
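
Log lines in the `key=value` shape shown above can be stitched by trace ID with a few lines of Python. A minimal sketch: the field names come from the sample line, while the second and third log lines and the function names are hypothetical.

```python
from collections import defaultdict

def parse_line(line: str) -> dict[str, str]:
    """Parse a 'key=value key=value' log line into a dict."""
    return dict(pair.split("=", 1) for pair in line.split() if "=" in pair)

def group_by_trace(lines: list[str]) -> dict[str, list[dict[str, str]]]:
    """Collect parsed spans under their trace_id, preserving log order."""
    traces = defaultdict(list)
    for line in lines:
        fields = parse_line(line)
        if "trace_id" in fields:
            traces[fields["trace_id"]].append(fields)
    return dict(traces)

logs = [
    "trace_id=wf-1827 span=llm.call status=ok p95=180ms",
    "trace_id=wf-1827 span=retriever.query status=ok",  # hypothetical line
    "trace_id=wf-1828 span=llm.call status=error",      # hypothetical line
]
traces = group_by_trace(logs)
```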

Manage Prompts like Code: Versioning and Diffing.
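
Treating prompts like code means two versions can be diffed the way source files are. A minimal sketch using the standard library's `difflib`; the prompt texts and the `v1`/`v2` labels are made up for illustration, and this is not how Dify itself stores prompt versions.

```python
import difflib

def diff_prompts(old: str, new: str, old_label: str = "v1", new_label: str = "v2") -> str:
    """Return a unified diff between two prompt versions."""
    lines = difflib.unified_diff(
        old.splitlines(),
        new.splitlines(),
        fromfile=old_label,
        tofile=new_label,
        lineterm="",
    )
    return "\n".join(lines)

v1 = "You are a support agent.\nAnswer briefly."
v2 = "You are a support agent.\nAnswer briefly and cite sources."
patch = diff_prompts(v1, v2)
```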

Breaking silos between Prompt Engineers and Developers.

Beyond linear flows: Loops, Iterations, and Conditionals.

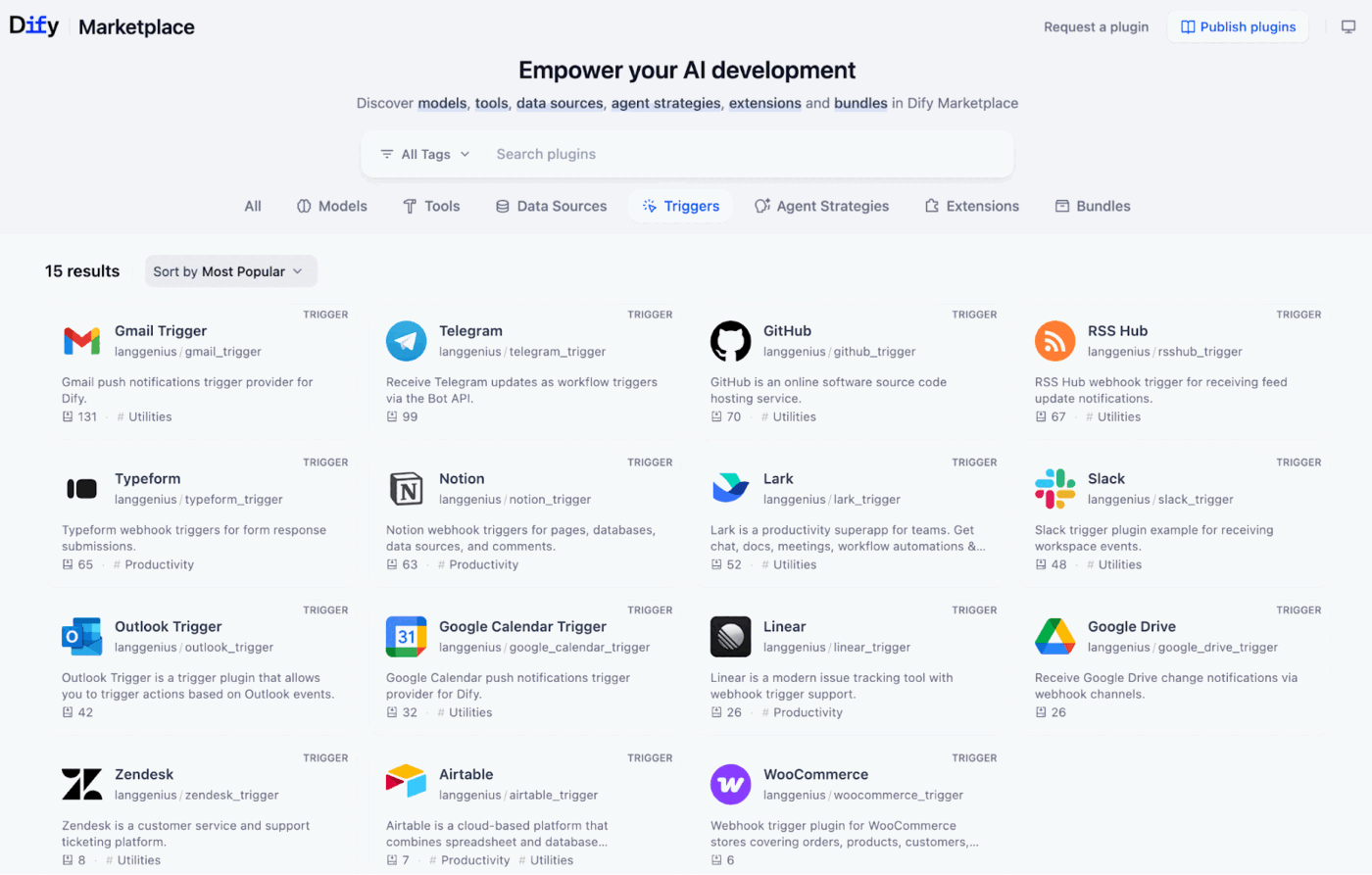

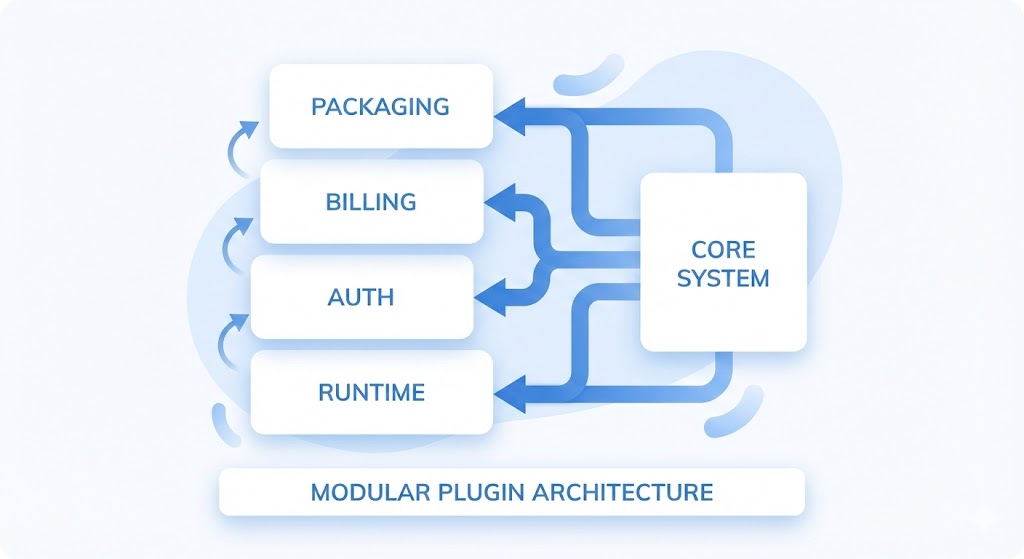

Marketplace & plugins make Dify platform-ready.

Standard APIs, auth, and multi-language runtimes.

Isolated execution with timeout and retry control.

API Key / OAuth / internal credentials.

Pluggable quotas and metering.

Marketplace publishing and versioning.

    curl -X POST https://api.dify.ai/plugins/slack_bot \
      -H "Authorization: Bearer <token>" \
      -d '{
        "channel_id": "ops-alert",
        "text": "Build passed ✅",
        "bot_token": "******"
      }'

plugin-9821 (jump to logs)

manifest + schema

code node / runtime

local + sandbox

Marketplace / internal

"Definition is development": manifest + simple code.

… (`bot_token`, `channel_id`).

… `Tool`, send message via Slack SDK in `_invoke`.

Trust no code. Isolation is key for platform stability.

3rd-party Plugin Code

Arbitrary Network Calls

High Resource Usage

Namespace Isolation

Resource Quotas

Network Whitelist
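
The network whitelist idea can be illustrated with a host check that a sandbox might run before letting plugin code make an outbound call. A sketch only: the hosts in `ALLOWED_HOSTS` and the function name are hypothetical, and a real sandbox would more likely enforce this at the network layer than inside Python.

```python
from urllib.parse import urlparse

ALLOWED_HOSTS = {"slack.com", "api.github.com"}  # hypothetical allowlist

def is_allowed(url: str, allowed: set[str] = ALLOWED_HOSTS) -> bool:
    """Allow only HTTPS calls to an allowlisted host or one of its subdomains."""
    parsed = urlparse(url)
    if parsed.scheme != "https":
        return False
    host = (parsed.hostname or "").lower()
    # Match on a dot boundary so "slack.com.evil.net" is not accepted.
    return any(host == a or host.endswith("." + a) for a in allowed)
```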

Agentic Behavior: Plugins are not just executors, but deciders.

    def _invoke(self, params):
        user_intent = analyze_intent(params['input'])
        if user_intent == 'complex_task':
            # Reverse call back to Dify API
            response = dify_client.run_workflow(
                workflow_id="wf-sub-task-001",
                inputs={"query": params['input']},
                user_id=params['user_id']
            )
            return {"result": response['data'], "delegated": True}
        return {"result": simple_process(params), "delegated": False}

Connecting developers and enterprises for shared value.

Build high-quality tools

Publish to Marketplace

Revenue share

Distribution channel

Billing & Settlement

Security review

One-click install

SaaS Subscription

Solve business pain

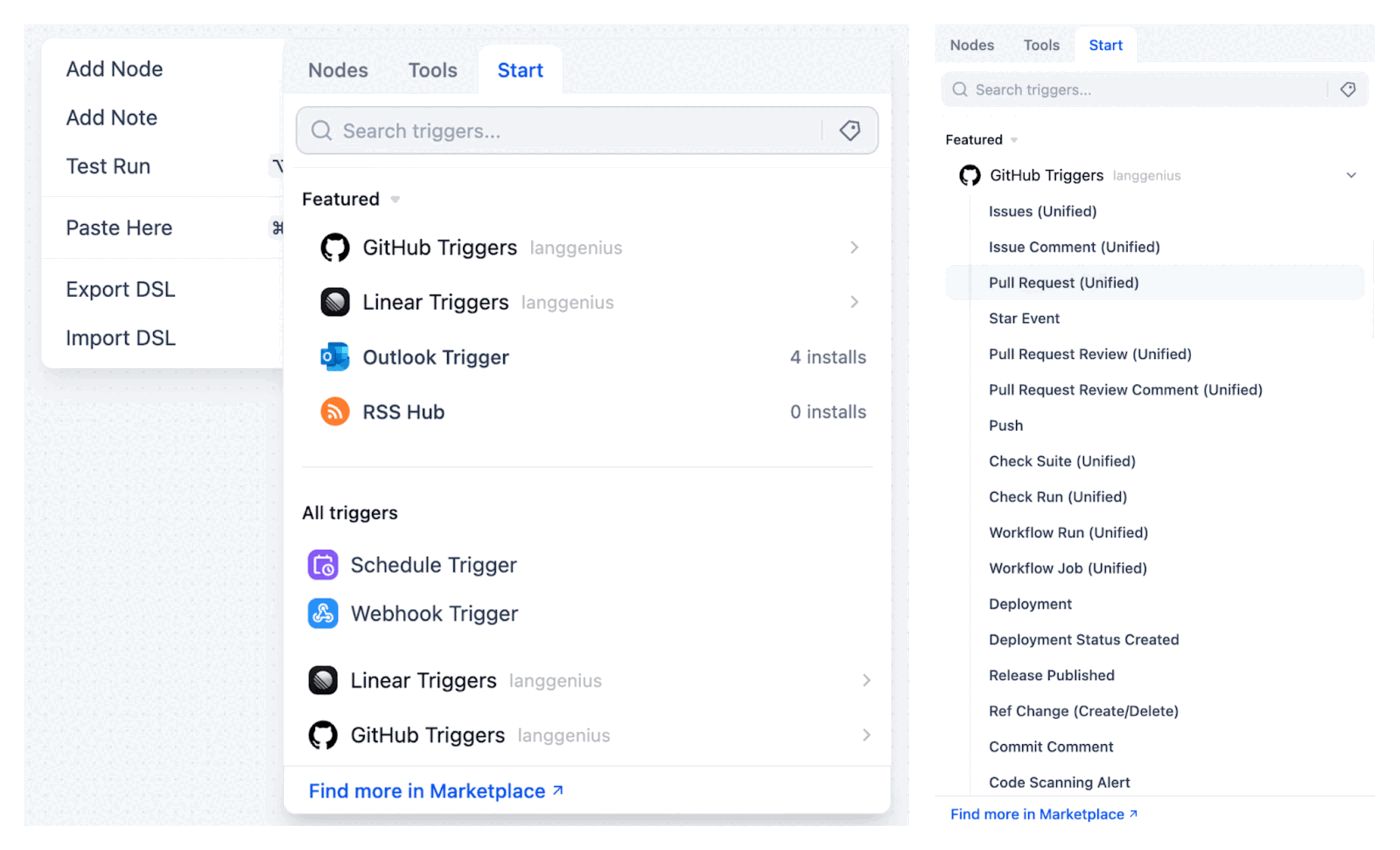

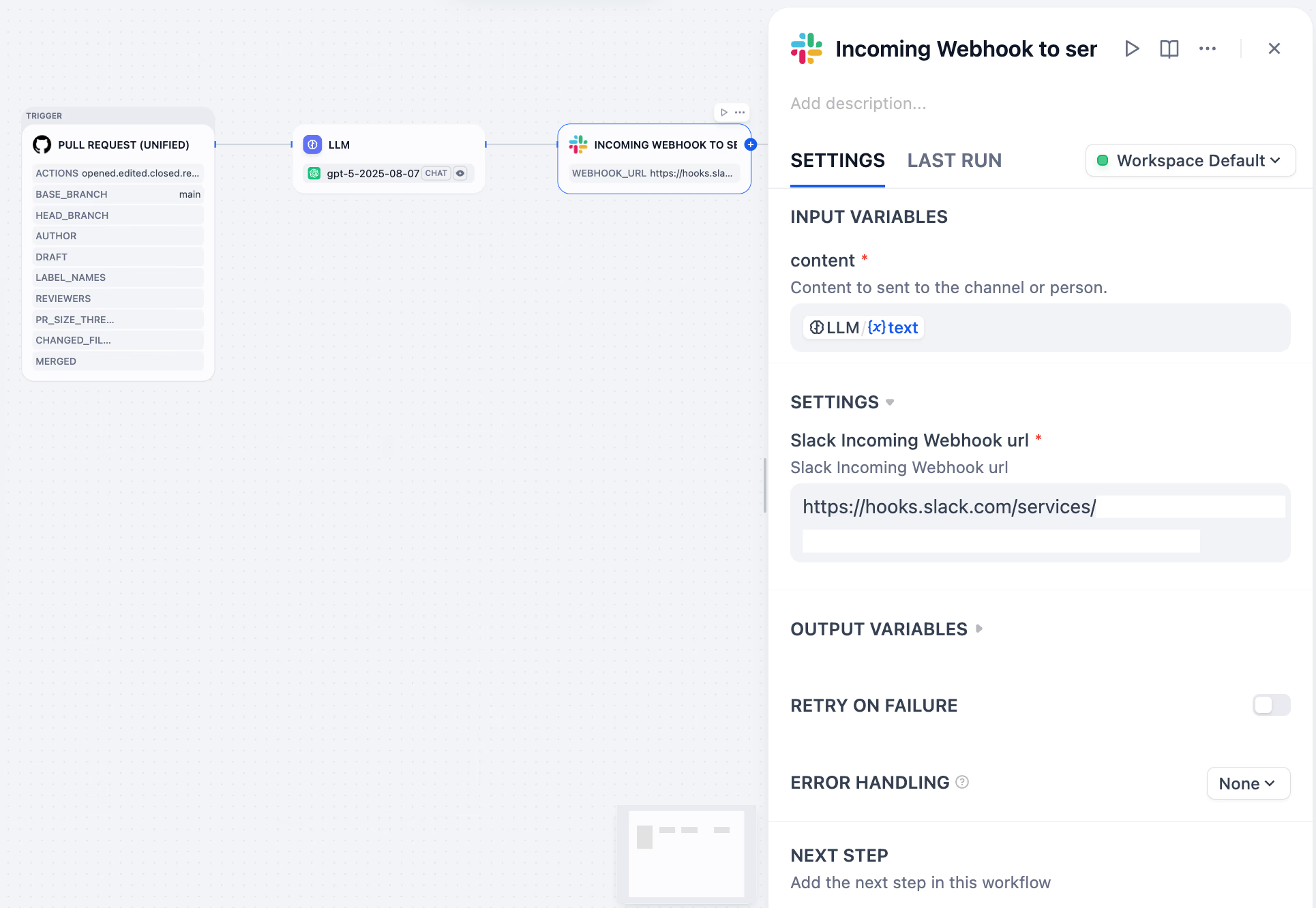

Triggers move apps from passive Q&A to proactive execution.

Webhook → Dify parses payload → LLM routes → action out.

Receive external event, verify signature.

Choose model/path based on context.

Call plugins/tools, write output.

Tracing + token/cost.
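
The "verify signature" step in the flow above is commonly done with an HMAC over the raw request body. A minimal sketch, assuming HMAC-SHA256 and a `sha256=<hex>` header value in the style GitHub uses for webhooks; the scheme Dify actually expects is not stated here, and the secret and payload below are made up.

```python
import hashlib
import hmac

def sign(secret: bytes, body: bytes) -> str:
    """Compute the signature a sender would attach to the request."""
    return "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()

def verify_signature(secret: bytes, body: bytes, header_value: str) -> bool:
    """Recompute the signature and compare in constant time."""
    return hmac.compare_digest(sign(secret, body), header_value)

secret = b"shared-secret"           # hypothetical shared secret
body = b'{"action": "opened"}'      # hypothetical raw payload
header = sign(secret, body)
```

Verification has to run on the raw bytes before any JSON parsing, since re-serializing the payload can change the bytes and break the comparison.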

Bring “when to start” back to the orchestration layer.

| Without triggers | With triggers |

|---|---|

| Scattered entrypoints: buttons / cron / direct calls | Unified entry: event source → mapping → routing |

| Hard to govern: no trace, hard to debug | Governable: replayable, observable, auditable |

| Start conditions live in scripts/code | Start conditions live in the workflow canvas |

| Trigger logic hard to reuse | One canvas: branch first, merge later |

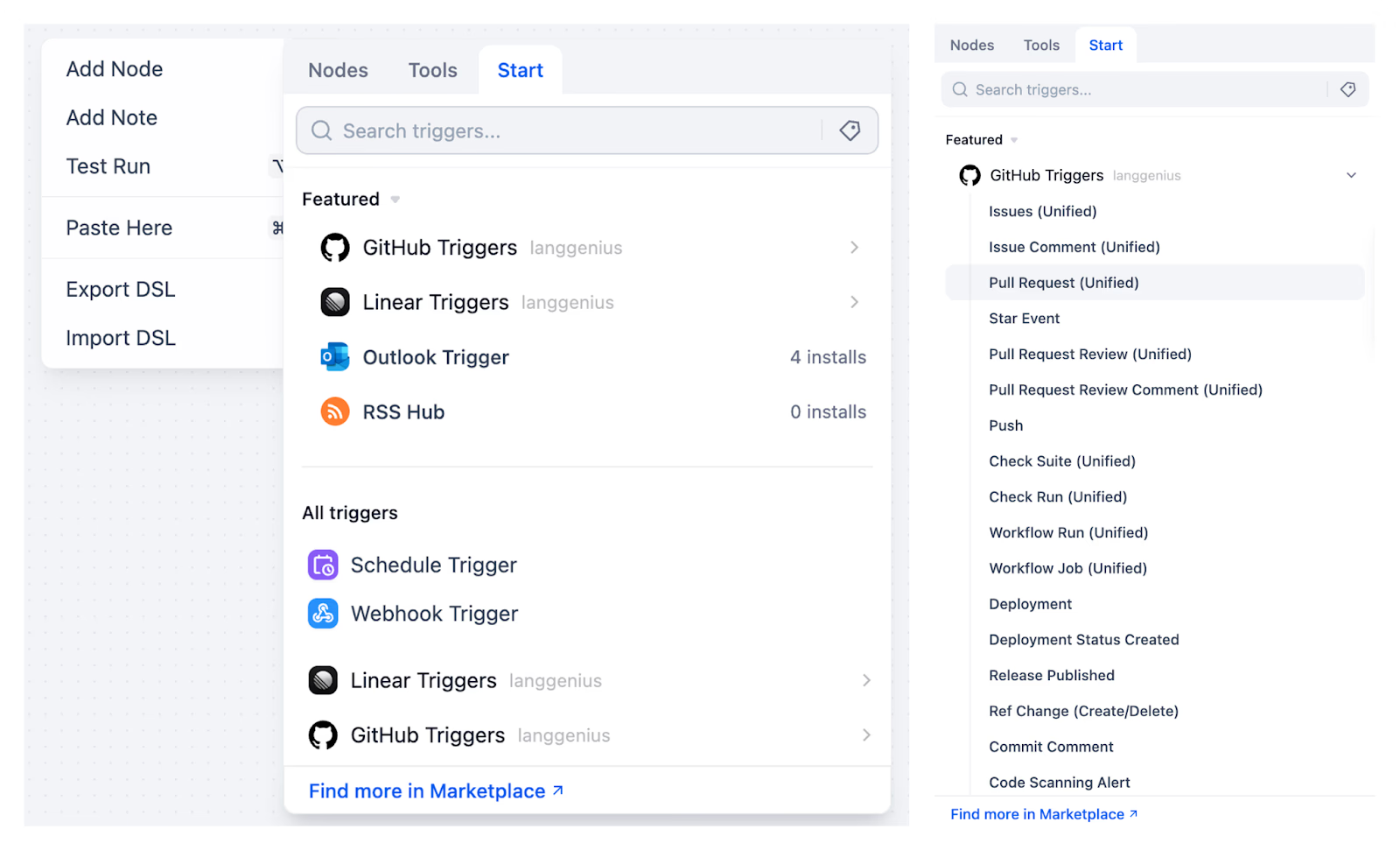

Covers schedules, third-party events, and in-house systems.

Run at fixed cadence: reports, cleanup, health checks.

Third-party events: PR updates, ticket changes, docs edits. One subscription can drive multiple workflows.

For your systems. Unique URL per trigger; query/header/body map to variables; optional callback with workflow result.
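
Mapping query, header, and body fields to workflow variables can be pictured as a small lookup table applied to each incoming request. A sketch under assumptions: the `MAPPING` format, the variable names, and the URL are hypothetical and do not reflect Dify's actual trigger configuration.

```python
import json
from urllib.parse import parse_qs, urlparse

# Hypothetical mapping: workflow variable -> (request part, key)
MAPPING = {
    "ticket_id": ("query", "id"),
    "source": ("header", "x-source"),
    "message": ("body", "text"),
}

def map_request(url: str, headers: dict, raw_body: str, mapping: dict = MAPPING) -> dict:
    """Extract workflow variables from the query string, headers, and JSON body."""
    query = {k: v[0] for k, v in parse_qs(urlparse(url).query).items()}
    parts = {
        "query": query,
        "header": {k.lower(): v for k, v in headers.items()},  # header names are case-insensitive
        "body": json.loads(raw_body) if raw_body else {},
    }
    return {var: parts[part].get(key) for var, (part, key) in mapping.items()}

variables = map_request(
    "https://example.invalid/triggers/abc?id=42",
    {"X-Source": "crm"},
    '{"text": "refund please"}',
)
```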

Plugin trigger + LLM = automated code review loop.

… `action/pull_request/repo/sender`.

Ensuring zero event loss and non-blocking execution.
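
One common way to get non-blocking intake is to acknowledge an event as soon as it is queued and process it on a worker. The sketch below shows that generic pattern with the standard library; it is not a description of Dify's implementation, the event fields are hypothetical, and an in-memory queue alone does not survive a restart, so real zero-loss delivery would need a durable queue.

```python
import queue
import threading

events: queue.Queue = queue.Queue()
processed: list[str] = []

def receive(event: dict) -> int:
    """Enqueue and acknowledge immediately; 202 means accepted for processing."""
    events.put(event)
    return 202

def worker() -> None:
    """Drain the queue; a None sentinel stops the loop."""
    while True:
        event = events.get()
        try:
            if event is None:
                break
            processed.append(event["action"])  # placeholder for the real workflow run
        finally:
            events.task_done()

thread = threading.Thread(target=worker, daemon=True)
thread.start()
statuses = [receive({"action": a}) for a in ("opened", "synchronize")]
events.put(None)  # signal shutdown
events.join()     # wait until every queued item has been handled
```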

From email ingress to auto-classification and human loop.

Customer emails support@company.com → Webhook fires Dify.

Analyze content: "Refund", "Technical Issue", or "General"?

Draft reply → Send to Slack for approval → Click to send.

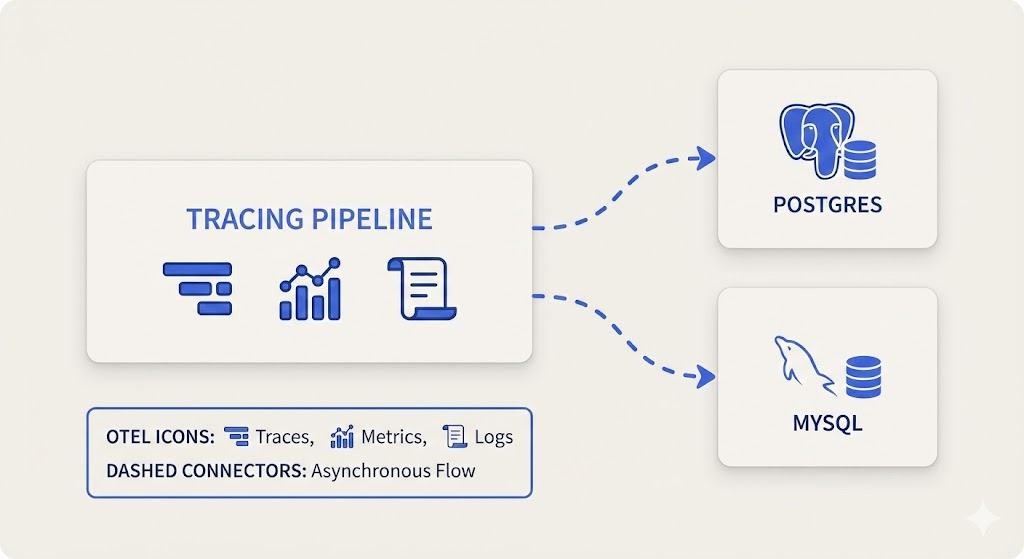

Transparent, traceable, portable engineering.

Not just logs—one trace across LLM + infra.

Dify ships fast—so probes must be non-invasive.

aliyun-instrument uses wrapt to hook into Dify's core methods at runtime.
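
The runtime-hook idea can be shown without touching the target's source. The sketch below uses the standard library's `functools.wraps` as a stand-in for `wrapt` (whose own API is not shown here), and the `WorkflowRunner` class is a dummy with the same method name, not Dify's real class.

```python
import functools
import time

class WorkflowRunner:  # dummy stand-in, not Dify's implementation
    def run(self, payload):
        """Run a workflow."""
        return {"echo": payload}

spans: list[tuple[str, float]] = []

def instrument(cls, method_name: str) -> None:
    """Swap cls.method_name with a timing wrapper, leaving the source untouched."""
    original = getattr(cls, method_name)

    @functools.wraps(original)  # preserves __name__ and __doc__ of the original
    def wrapper(self, *args, **kwargs):
        start = time.perf_counter()
        try:
            return original(self, *args, **kwargs)
        finally:
            # Record the span even if the wrapped call raises.
            spans.append((f"{cls.__name__}.{method_name}", time.perf_counter() - start))

    setattr(cls, method_name, wrapper)

instrument(WorkflowRunner, "run")
result = WorkflowRunner().run({"q": "hi"})
```

Callers still see a method named `run` with its original docstring, which is what keeps the probe out of the way of business logic.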

… (`WorkflowRunner.run`) are swapped with a wrapper.

`wrapt` ensures metadata (like `__name__`) is preserved, keeping it invisible to business logic.

Per-span rates: LLM 20%, vector search 50%, critical transactions 100%.

Adjust prompt length + concurrency by queue depth and TPS.

Error >1% → sample 100% and enable debug logs.
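
The sampling rules above (per-span base rates, escalation to 100% once errors pass 1%) fit in a small decision function. A sketch: the span-kind keys and function names are hypothetical, and sampling unknown span kinds at 100% is an assumption, not something the rules specify.

```python
import random

BASE_RATES = {"llm": 0.20, "vector_search": 0.50, "critical": 1.00}
ERROR_THRESHOLD = 0.01  # error rate above 1% escalates to full sampling

def sample_rate(span_kind: str, error_rate: float) -> float:
    """Return the sampling probability for a span kind at the current error rate."""
    if error_rate > ERROR_THRESHOLD:
        return 1.0
    return BASE_RATES.get(span_kind, 1.0)  # assumption: unknown kinds are fully sampled

def should_sample(span_kind: str, error_rate: float, rng=random.random) -> bool:
    """Decide whether to keep this span; rng is injectable for testing."""
    return rng() < sample_rate(span_kind, error_rate)
```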

Frontend looks fine but RAG returns empty; no app logs.

Workflow trace shows retriever runs fast and returns empty string.

Click "infrastructure link" on span to open SLS/Jaeger with TraceID.

One shard of vector DB timed out; retry policy missing. Add retry + fallback and redeploy.
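
The fix named above, retry plus fallback, might look like the helper below. A sketch with hypothetical names: the attempt count and delays are illustrative, and `flaky_shard_query` simulates a shard that times out twice before answering.

```python
import time

def with_retry(fn, attempts: int = 3, base_delay: float = 0.01, fallback=None, sleep=time.sleep):
    """Call fn, retrying on TimeoutError with exponential backoff, then fall back."""
    for attempt in range(attempts):
        try:
            return fn()
        except TimeoutError:
            if attempt < attempts - 1:
                sleep(base_delay * 2 ** attempt)
    if fallback is None:
        raise TimeoutError(f"gave up after {attempts} attempts")
    return fallback()

calls = {"n": 0}

def flaky_shard_query():
    """Simulated shard: times out on the first two calls, then succeeds."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError("shard timed out")
    return ["doc-7"]

def always_timeout():
    raise TimeoutError("shard timed out")

result = with_retry(flaky_shard_query)
degraded = with_retry(always_timeout, attempts=2, fallback=lambda: [])
```

Returning an explicit fallback value keeps a degraded result distinguishable from a retriever that silently returns nothing.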

`DatabaseConfig` adds PostgreSQL, MySQL, OceanBase.

To ensure schema compatibility across dialects, we introduced independent migration tests for MySQL.

Replacing dialect-specific Raw SQL with Python Helpers & ORM.

`convert_datetime_to_date` wraps truncation logic (Postgres `date_trunc` vs MySQL `date_format`).

`dataset_retrieval.py` removes raw SQL, using SQLAlchemy JSON methods for type safety.
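
A dialect-aware helper of the kind described above could be as small as the following. This is a hypothetical sketch: the real `convert_datetime_to_date` signature is not shown in this deck, so the parameters and the dialect names used here are assumptions.

```python
def convert_datetime_to_date(column: str, dialect: str) -> str:
    """Return a SQL expression that truncates `column` to day precision.

    `column` must be a trusted identifier, never user input.
    """
    if dialect == "postgresql":
        return f"DATE_TRUNC('day', {column})"
    if dialect == "mysql":
        return f"DATE_FORMAT({column}, '%Y-%m-%d')"
    raise ValueError(f"unsupported dialect: {dialect}")

pg_expr = convert_datetime_to_date("created_at", "postgresql")
mysql_expr = convert_datetime_to_date("created_at", "mysql")
```

Keeping the branch in one helper means call sites stay dialect-neutral, which is the point of replacing raw SQL.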

Granular operations: Know where every penny goes.

Unified orchestration + guardrails + ecosystem + triggers → observable, evolvable production AI.